If you have spent time with FIM, you already know (and if you have not, you will soon learn) that migrating a FIM Service configuration from one environment to another can be very difficult.

While Microsoft provides a procedure, some scripts, and some underlying PowerShell primitives to assist with the process, the suggested solution makes the assumption that you will be migrating an entire configuration, and that you do not need to manage multiple configurations. To be fair, what Microsoft provides is only a suggested starting point.

In this blog post, I will discuss a procedure that we have put together at CSS that improves the migration process. We certainly have not solved all the problems, but our hope is that you will find what we have done helpful and useful for creating your own FIM Service migration process.

If you read the TechNet article on FIM configuration migration, you will see that it covers backing up the databases, extracting the configuration for the FIM Service and Sync Engine, copying FIM Service workflow and Sync Engine extension DLLs, comparing the FIM Service configurations, and importing the FIM Service changes into the target system. Many of these steps are important (especially backup) but are not covered in this post. I am only going to talk about migration of schema and policy in the FIM Service itself. Please take the time to read the TechNet article (linked at the end of this post).

To assist with the issues of managing multiple configurations, we have updated the PowerShell scripts to create and work from directories that contain individual configurations. By automating the creation of configuration-related directories, we reduce the errors that can occur when manually copying and renaming files, as the stock configuration migration process requires.

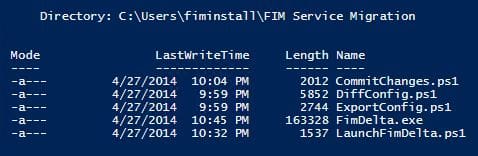

The first step is to obtain the scripts and delta tool. They are available in the zip file listed under “Useful links” at the end of this post. Unzip the files into a directory called “FIM Service Migration” or similar. Do this on both your development and production machines (or whatever environments you are migrating between).

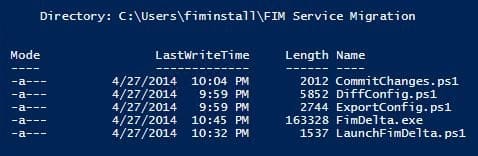

Next, run the ExportConfig.ps1 script in both of your environments. This script combines the functions of the original ExportSchema and ExportPolicy scripts and puts the resultant export files into a subdirectory named with the current date and time. Note that, as with the original Microsoft scripts, you need to run this as an account that is in the FIM Service “administrators” set. If you don’t, you won’t get a complete configuration export.

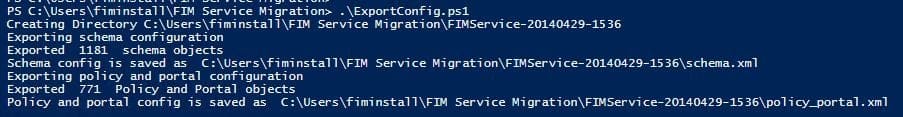

As the directory created by the ExportConfig script represents a FIM Service configuration for a specific environment at a specific point of time, you will want to rename the directory to something that is more meaningful to you. I would recommend at least including the name of the environment and either a timestamp or a version number. In this example, I will rename the above export (from the development machine) to “Dev-20140429” to indicate that this is the configuration from the development environment as of April 29. On the production machine, I will do the same thing but use the directory name “Prod-20140429.” Copy the directory from the development machine to the production machine so that both directories are on the production machine. On the production machine, you should have something like:

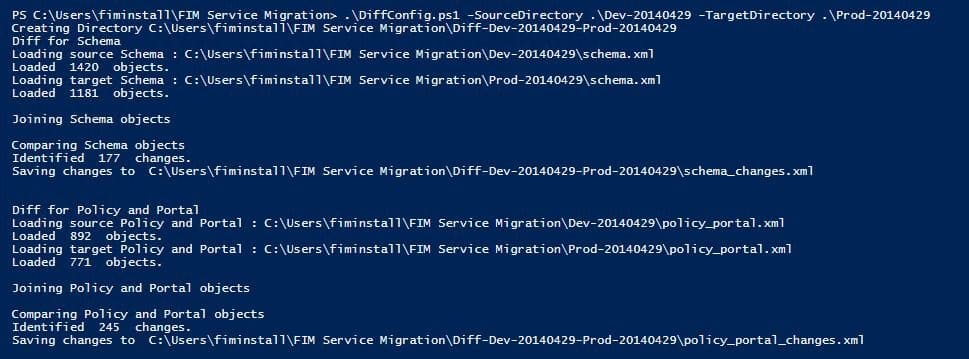
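The naming convention is easy to script. Here is a minimal Python sketch (not part of the migration scripts); the helper name is hypothetical and the `Env-YYYYMMDD` layout simply mirrors the example above.

```python
from datetime import date


def config_dir_name(environment: str, taken_on: date) -> str:
    """Build a directory name such as 'Dev-20140429' (hypothetical helper)."""
    # The date is rendered as YYYYMMDD so that names sort chronologically.
    return f"{environment}-{taken_on:%Y%m%d}"


if __name__ == "__main__":
    print(config_dir_name("Dev", date(2014, 4, 29)))
    print(config_dir_name("Prod", date(2014, 4, 29)))
```

Putting the date in year-month-day order means a plain alphabetical listing of the directories is also a chronological one.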

Next, run the DiffConfig.ps1 script. This script combines the functions of the original SyncSchema and SyncPolicy scripts. Instead of requiring you to rename the export files to pilot.xml and production.xml for both the schema and policy extracts (and to remember which files are which), the DiffConfig script takes the directory names from the previous steps as parameters. The SourceDirectory parameter is the new configuration that you want to apply somewhere (in our case the development environment) and the TargetDirectory parameter is the configuration that we want to apply the source configuration on top of (in our case production). We want to apply the source to the target (thus making the target look like the source).

The script will calculate what changes are necessary on the target and create a set of changes files. These files are placed into a new directory that is named “Diff-” followed by the names of the source and target directories.
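To make the idea of "making the target look like the source" concrete, here is a toy Python sketch. It is not how the FIM cmdlets work internally: the configurations are plain dictionaries, the operation labels are made up for illustration, and the hyphen used to join the directory names is an assumption.

```python
def compute_changes(source, target):
    """List the operations that would make `target` match `source`.

    Both arguments map an object name to a dict of attribute values.
    """
    changes = []
    for name, attrs in source.items():
        if name not in target:
            changes.append(("create", name, dict(attrs)))
        elif target[name] != attrs:
            # Record attributes whose values are new or different ...
            delta = {k: v for k, v in attrs.items() if target[name].get(k) != v}
            # ... and mark attributes that exist only on the target as None.
            delta.update({k: None for k in target[name] if k not in attrs})
            changes.append(("update", name, delta))
    for name in target:
        if name not in source:
            changes.append(("delete", name, {}))
    return changes


def diff_dir_name(source_dir, target_dir):
    """Name the output directory 'Diff-' plus both names (separator assumed)."""
    return f"Diff-{source_dir}-{target_dir}"


if __name__ == "__main__":
    dev = {"Location": {"Description": "Office"}, "All Staff": {"Filter": "new"}}
    prod = {"All Staff": {"Filter": "old"}, "Legacy Set": {}}
    for change in compute_changes(dev, prod):
        print(change)
    print(diff_dir_name("Dev-20140429", "Prod-20140429"))
```

Note that swapping the two arguments produces the reverse set of operations, which is what makes the "backwards" comparisons later in this post possible.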

The script uses the same techniques (FIM PowerShell automation cmdlets) as the stock Microsoft scripts and has the same issues. If you have objects with non-unique display names, or if you have created custom resource types, you may have to clean your data or edit the join rules inside the script. (For that matter, the previous export process can have similar issues if there are invalid references in your configuration or if you have string values that “look like” references to the automation cmdlets.)
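A quick pre-check for the display name problem might look like the following Python sketch. It assumes you have already loaded the exported objects into a list of dictionaries with `ObjectType` and `DisplayName` keys (the loading step is not shown), and it assumes objects are matched on display name within each object type.

```python
from collections import Counter


def duplicate_display_names(objects):
    """Return (object type, display name) pairs that occur more than once."""
    counts = Counter((o["ObjectType"], o["DisplayName"]) for o in objects)
    return sorted(key for key, n in counts.items() if n > 1)


if __name__ == "__main__":
    exported = [
        {"ObjectType": "Set", "DisplayName": "All People"},
        {"ObjectType": "Set", "DisplayName": "All People"},
        {"ObjectType": "Set", "DisplayName": "Administrators"},
    ]
    for object_type, name in duplicate_display_names(exported):
        print(f"Duplicate {object_type}: {name}")
```

Anything this reports is a candidate for renaming before you run the diff.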

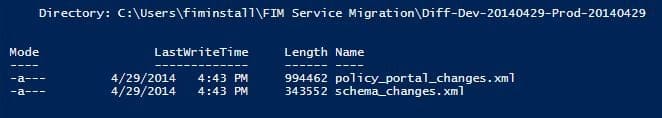

Looking in the resultant directory, we now have:

Other than the file names, these files are the same as the changes.xml files that the stock SyncSchema and SyncPolicy scripts would have provided. If we knew that we wanted to apply all the changes that were calculated, we could just apply these files to the production environment and be done.

What if, however, we have things in the development environment that we don’t want to roll to production?

In our example, the development environment contains some extra resource types that were inadvertently created. In the FIM Service, once you create a new object type and use it, you cannot delete it, so this can become an issue if you do not want extra stuff in production. Our configuration also includes some sets with manual membership. The people placed in the development environment sets will not be present in production, so if we don’t do something special, the migration process will try to either create the development users in production or complain that it cannot find corresponding users.

Traditionally, to correct this, you would have to open up the changes.xml file and edit out the objects that you do not want to roll to production. In addition, if you just wanted to see the exact changes that were about to be sent to production, you would have to inspect the changes file by hand. Inspecting and editing the changes file is difficult and error-prone, as it internally identifies objects by their GUID-style resource IDs and many objects contain references to other objects. Incorrectly editing an object or breaking a reference chain can lead to import failures later in the process.
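The "broken reference chain" risk can be illustrated with a small Python sketch. The data model here is invented for the example (each change has an `id` and a list of `refs` to other objects) and the IDs are placeholders rather than real resource IDs.

```python
def dangling_references(changes, excluded_ids):
    """Return (object id, missing reference) pairs caused by exclusions.

    A reference dangles when a kept change points at an object whose own
    change was excluded.
    """
    problems = []
    for change in changes:
        if change["id"] in excluded_ids:
            continue  # This change is not being migrated at all.
        for ref in change["refs"]:
            if ref in excluded_ids:
                problems.append((change["id"], ref))
    return problems


if __name__ == "__main__":
    changes = [
        {"id": "type-1", "refs": []},
        {"id": "binding-1", "refs": ["type-1"]},
    ]
    # Excluding the resource type leaves the binding pointing at nothing.
    print(dangling_references(changes, {"type-1"}))
```

Doing this check by eye against raw GUIDs is exactly the part that makes hand editing so painful.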

About a year ago, a gentleman named Alex Skalozub posted a terrific tool at GitHub called “FimDelta.” This tool displays the contents of a changes file in a graphical tree structure and allows you to remove items you do not want to roll to production. Changes can be excluded at the object or attribute level. The tool also makes it easy to navigate the references and relationships between objects. Even if you are not going to exclude objects from your production roll, it is a great tool for simply seeing what the changes are.

For some reason the tool does not seem to be in wide use. Most of our customers have not heard of it. It may be because the tool is a little difficult to launch as it requires you to copy and rename the source and target extracts files as well as the changes file to the directory the tool is installed in.

To make it easier to use and to tie it into our migration process, I have made some updates to the tool. I have added the ability to pass the file names it needs on the command line and added traditional “file” menus so that from inside the tool you can navigate to and open the files you need as well as specify the name and location of the output changes file. I have also added the ability to save and restore the “exclusions” or the things you do not want to migrate. This can save time if you need to do multiple migrations.

The updated tool is included along with the migration scripts in the above link and the updated source code is available on GitHub (as with all free stuff, use at your own risk, no warranty, etc.).

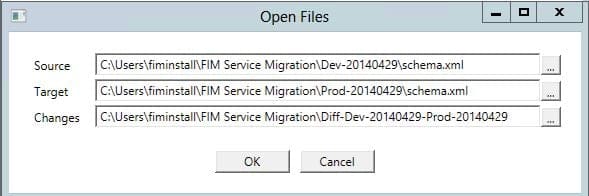

Let’s look at the tool and see how to exclude the schema changes we have in development, but don’t want in production. The LaunchFimDelta.ps1 script launches the tool and gives it the necessary file names. You will see that it takes the same parameters as the DiffConfig script plus one more. This means that if you have just run the Diff script, you can press the up arrow, edit the name of the script, press the End key and add the category parameter. The category parameter takes either “schema” or “policy_portal” depending on which set of changes you are examining.


When the tool opens, you will see the “Open Files” dialog ready to go; just click OK. If you run the tool manually, you will need to specify the source and target extract files as well as the changes file generated by the diff process. Note that if you give the tool a set of files that don’t go together, it will likely crash.

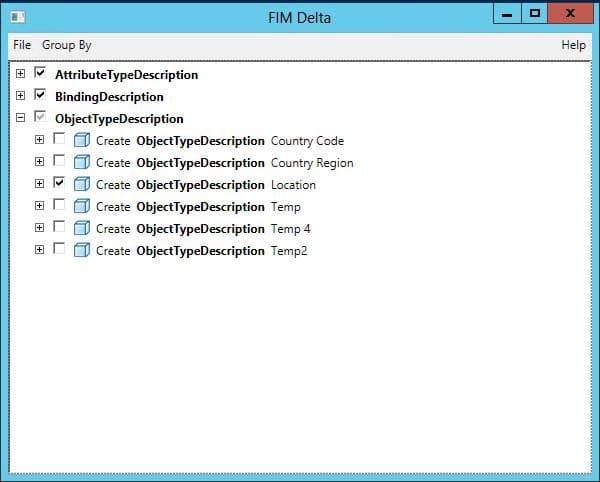

The details of how to use the tool are a topic for another blog post, but you can sort the changes by operation or object type and you can spend all sorts of time examining and filtering your changes file. For this narrative, we will exclude some unwanted object types:

You can see I have unchecked five resource types (object types) that we don’t want to roll to production and have left checked the one new resource type (Location) that we do want to send to the production environment.

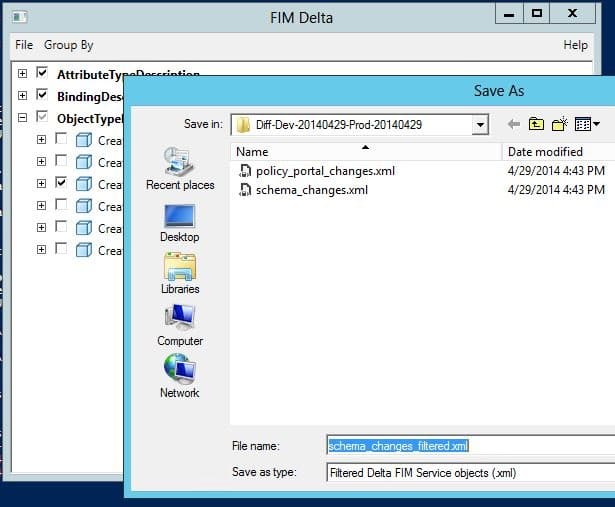

We will now save our changes (File, Save As). By default, the tool appends “_filtered” to the file name and puts it in the same directory that the changes file came from.
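As a sketch of that default naming rule in Python (the helper and the `changes.xml` file name are hypothetical, used only to show where the suffix lands):

```python
from pathlib import PurePath


def filtered_name(changes_file: str) -> str:
    """Insert '_filtered' before the extension, keeping the same directory."""
    path = PurePath(changes_file)
    return str(path.with_name(f"{path.stem}_filtered{path.suffix}"))


if __name__ == "__main__":
    print(filtered_name("changes.xml"))
```

Keeping the filtered file beside the original means the unfiltered changes remain available as a record of what was calculated.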

Next, we will prevent the development users from being migrated to production. The next screenshot shows what the delta tool looks like after running the LaunchFimDelta script, this time with the policy_portal option:

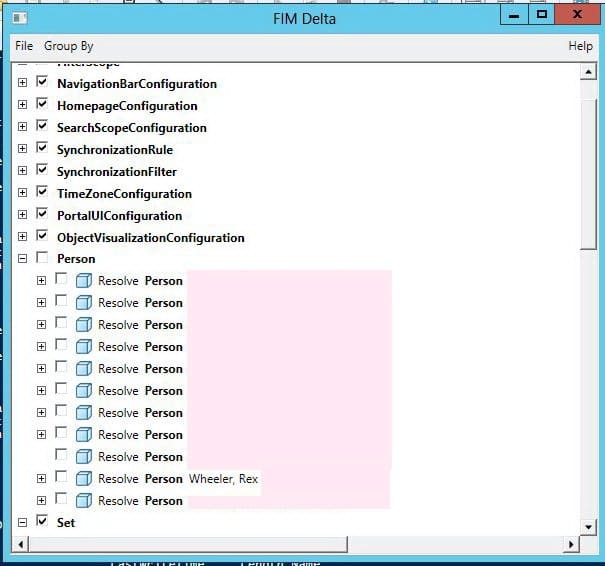

I have grouped the changes by object type and you can see examples of the various non-schema object types that are part of a FIM Service configuration. I have unchecked all of the “Person” objects because I don’t want to move any identities from development into production. You can see that the operation type is “Resolve,” which is the operation that the import process (coming up next) uses to align references to objects that don’t yet exist in the target environment. Since we are not moving users, we don’t want to bother trying to look them up.

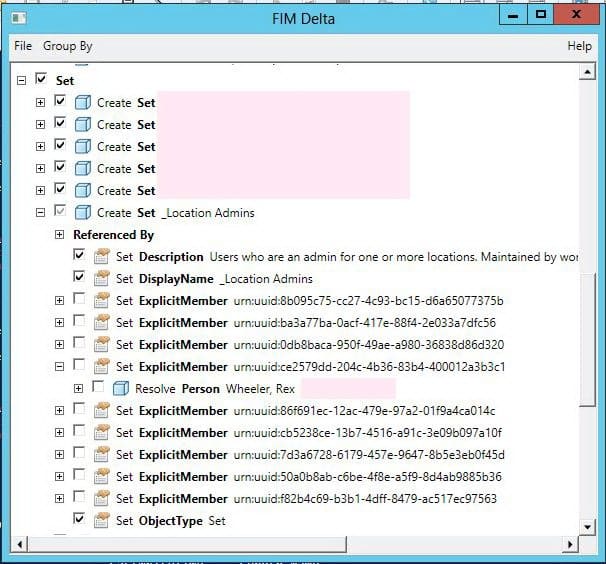

Scrolling down a bit, we can see some set definitions:

Here you can see that we are opting to create a set called “_Location Admins” but are not going to roll forward the actual membership of the set. In the changes file set members are stored as references, but the delta tool has the smarts to be able to show you what the references are. The screenshot shows that “ce2579dd-204c…” is actually me in the development environment. If I wanted to roll my account into production, I could have left my ExplicitMember attribute and the corresponding resolve action checked.
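Conceptually, the two levels of exclusion used here (whole objects and single attributes) amount to something like the following Python sketch. The change records, IDs and function name are invented for illustration and do not reflect the tool's actual file format or code.

```python
def apply_exclusions(changes, excluded_objects, excluded_attributes):
    """Drop excluded objects and excluded (object id, attribute) pairs."""
    kept = []
    for change in changes:
        if change["id"] in excluded_objects:
            continue  # Object-level exclusion: skip the whole change.
        attrs = {
            name: value
            for name, value in change["attributes"].items()
            if (change["id"], name) not in excluded_attributes
        }
        kept.append({**change, "attributes": attrs})
    return kept


if __name__ == "__main__":
    changes = [
        {"id": "person-1", "operation": "Resolve", "attributes": {}},
        {
            "id": "set-1",
            "operation": "Create",
            "attributes": {"DisplayName": "_Location Admins", "ExplicitMember": "person-1"},
        },
    ]
    result = apply_exclusions(changes, {"person-1"}, {("set-1", "ExplicitMember")})
    print(result)
```

The set is still created, but without its membership and without the lookup of the development user.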

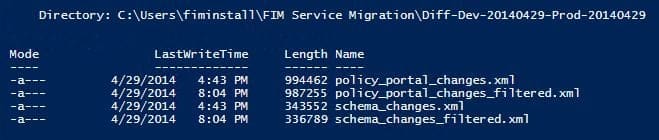

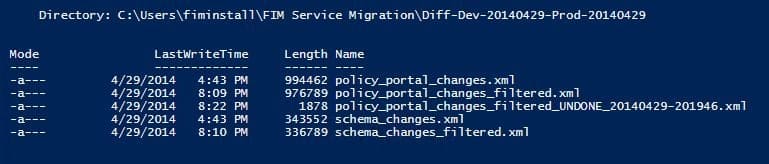

After saving, we have the following in our “diff directory:”

Now it is time to actually import the changes into production. To do this we use the CommitChanges.ps1 script. This script combines the functions of the original CommitChanges and ResumeUndoneImports scripts.

Note that the CommitChanges script does actually make changes to your configuration, so as with any configuration change you should make sure you have a good backup of the FIM Service database before you run it.

CommitChanges takes the changes file as a parameter. You would typically pass it the “filtered” version of the file that you just created with the delta tool. Here is the import of the schema, which runs cleanly:


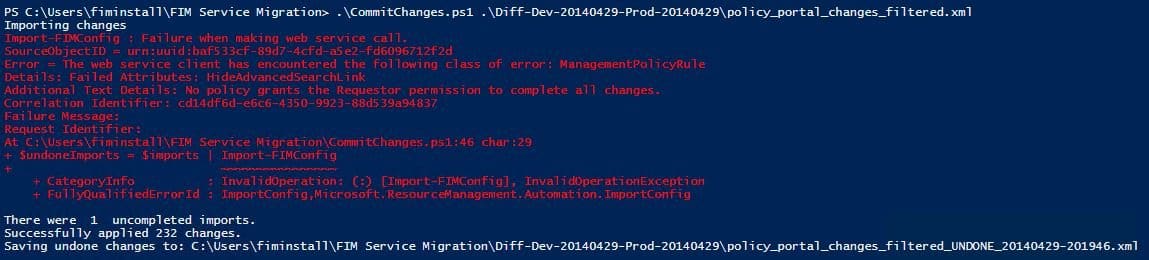

Sometimes the import does not run “cleanly”. In our case, we have an error when importing the policy:

In this case, we have used a feature that was added in a hotfix to FIM (the ability to hide the advanced search link in the portal). In the development environment, we have manually added the schema necessary to enable the feature and have created the management policy rule necessary to allow access to the new schema. These changes have not been made to production yet and, by chance, the configuration option that holds the new setting is being applied before the MPR that allows you to change the setting. This sort of ordering error is a common problem with configuration migrations. The solution will be to try again for the changes that could not be imported.
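The "try again" approach to ordering errors can be sketched in Python as a loop that resubmits whatever failed until nothing is left or a round makes no progress. This is an illustration of the idea only, not the logic of the CommitChanges script; `try_import` is a stand-in you would supply.

```python
def import_with_retries(changes, try_import, max_rounds=5):
    """Apply changes, resubmitting failures until none remain or no progress.

    `try_import` is a caller-supplied function that returns True on success.
    Returns the changes that could not be imported.
    """
    pending = list(changes)
    for _ in range(max_rounds):
        undone = [change for change in pending if not try_import(change)]
        if not undone or len(undone) == len(pending):
            return undone  # Either finished, or stuck with no progress.
        pending = undone
    return pending


if __name__ == "__main__":
    applied = set()

    def fake_import(change):
        # Simulate the ordering problem: the setting needs the MPR first.
        if change == "portal-setting" and "mpr" not in applied:
            return False
        applied.add(change)
        return True

    print(import_with_retries(["portal-setting", "mpr"], fake_import))
```

Stopping when a round makes no progress matters: a change that fails for a reason other than ordering will never succeed on retry, and needs a human to look at it.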

Like the original CommitChanges script, our updated script outputs any changes that it could not send to the target system. Unlike the original script, we build the name of the “undone” file with the name of the change file that was used plus a timestamp. This way you have a history of each round of “undones.”
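A naming scheme along those lines could look like this in Python. The `_undone_` infix and the timestamp format are assumptions made for the sketch; the actual script may lay the name out differently.

```python
from datetime import datetime
from pathlib import PurePath


def undone_name(changes_file: str, when: datetime) -> str:
    """Derive an 'undone' file name from the changes file plus a timestamp."""
    path = PurePath(changes_file)
    stamp = when.strftime("%Y%m%d-%H%M%S")  # Assumed format, sorts by time.
    return str(path.with_name(f"{path.stem}_undone_{stamp}{path.suffix}"))


if __name__ == "__main__":
    print(undone_name("changes_filtered.xml", datetime.now()))
```

Because each run gets its own timestamp, earlier undone files are never overwritten.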

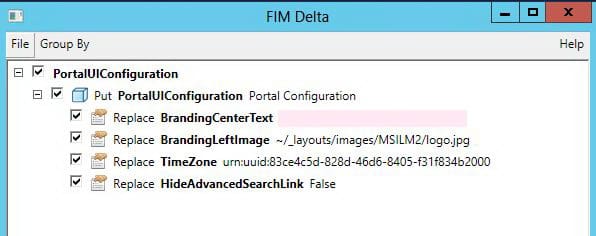

The cool thing is that the undone file is in the same format as the changes file. This means that if you don’t want to examine the XML by hand to see what didn’t make it to production, you can load it up in the delta tool…

The delta tool tells us that we are trying to update the “HideAdvancedSearchLink” (and three other) attributes. The problem was that the MPR that allows this had not been created yet.

In our case, the MPR we needed was in the original changes file (it was just after the update to PortalUIConfiguration) and is now in production. This means we can just submit the undone file back to the CommitChanges script, and this time the import succeeds.


If we take a last look at our diff directory, we will see that we have a nice history of the calculated changes for our “release;” the changes we actually rolled to production; and the changes with which we had difficulty:

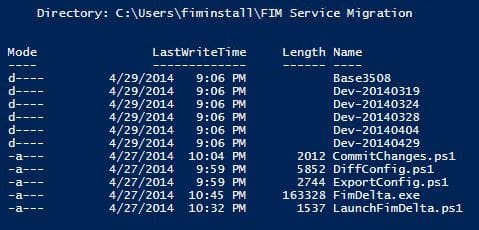

As a final thought (at last), consider that this process does not have to be for production migration only. You can also use it to track progress within a single environment. The next screenshot shows what a development environment might look like with configuration exports taken at various points in time (ignore the file update times – it is a simulation for the blog post, look at the directory names…)

The “Base3508” directory contains an export from a freshly installed FIM Service database patched to build 3508. The other directories contain exports of the configuration taken on the dates indicated in the directory names.

With this information, we can run the DiffConfig process on any two points in time:

- If we want to know what our entire configuration looks like: Run “DiffConfig .\Base3508 .\Dev-20140429”

- If we want to know what changed in the configuration between March 24 and March 28: Run “DiffConfig .\Dev-20140324 .\Dev-20140328”

It works backwards as well:

- If we want to know how to undo the changes that happened between April 4 and March 28: Run “DiffConfig .\Dev-20140404 .\Dev-20140328”

- If we only want to roll back a couple of the changes that occurred between April 4 and March 28, we can do the above, but run the (backward) changes file through the FIM Delta tool and uncheck the stuff we don’t want to roll back.

- If we want to back port some changes that have only been made in production: Run “DiffConfig .\Prod-xxx .\Dev-xxx”. Again, we can use the delta tool to just select the changes we want to back port and not clear out other changes in development that have not yet rolled forward.
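If you keep many dated exports, picking the pair to compare can itself be scripted. The Python sketch below assumes the `Env-YYYYMMDD` naming used in this post and simply orders the directories by date; it does not call DiffConfig, and names without a date (such as the baseline) are skipped.

```python
import re
from datetime import datetime

# Matches names like 'Dev-20140324' (assumed naming convention).
NAME_PATTERN = re.compile(r"^(?P<env>[A-Za-z]+)-(?P<date>\d{8})$")


def dated_exports(dir_names, environment):
    """Return (date, name) pairs for one environment, oldest first."""
    found = []
    for name in dir_names:
        match = NAME_PATTERN.match(name)
        if match and match["env"] == environment:
            taken = datetime.strptime(match["date"], "%Y%m%d").date()
            found.append((taken, name))
    return sorted(found)


def consecutive_pairs(dir_names, environment):
    """Pair each export with the next one in time: (earlier, later)."""
    names = [name for _, name in dated_exports(dir_names, environment)]
    return list(zip(names, names[1:]))


if __name__ == "__main__":
    dirs = ["Dev-20140404", "Base3508", "Dev-20140324", "Dev-20140328"]
    for earlier, later in consecutive_pairs(dirs, "Dev"):
        print(earlier, "->", later)
```

Each pair can then be handed to the diff step in whichever direction answers the question you are asking.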

While the above process is not perfect, it is a huge improvement over doing everything manually. I have found migrations much simpler when using it. I no longer struggle with trying to remember which version of the changes.xml file is sitting in front of me. I no longer cringe when I have to move just some configuration elements from one environment to another. I hope you find the above process and updated FIM Delta tool useful.

Useful links:

Zip file with the updated FIM Delta tool and the PowerShell migration scripts: https://www.css-security.com/fdist/FimServiceMigration1.3.zip

Source Code for the updated FIM Delta tool: https://github.com/RexWheeler/FIMDelta

TechNet article on FIM Configuration Migration: https://technet.microsoft.com/en-us/library/ff400277%28v=ws.10%29.aspx

Microsoft FIM Migration PowerShell scripts: https://technet.microsoft.com/en-us/library/ff400275%28v=ws.10%29.aspx

Documentation for FIM PowerShell Cmdlets: https://technet.microsoft.com/en-us/library/ff394179.aspx